
Editor's Pick

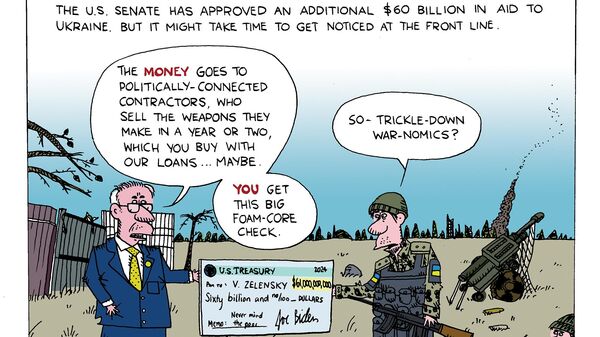

51% of Americans Unhappy With Congress Voting $61bn to Ukraine - Reports

Yesterday

DC Think-Tank: Threat of Iran-Israel War Still PresentYesterday

Why Russia's Approach to Artificial Intelligence May Save Civilization22 April

‘Two Birds With One Stone’: Biden Targets Radical IDF Unit With Sanctions to Placate His Voters22 April

Expansive US-Philippines War Games Slammed by China as Tension-Stoking & Muscle-Flexing22 April
