"We wanted to know how small we could shrink the amount of energy needed for computing," explained Jeffrey Bokor, professor of electrical engineering and computer sciences at the University of California, Berkeley, and senior author of a paper about the breakthrough published in the journal Science Advances on Friday.

"The biggest challenge in designing computers and, in fact, all our electronics today is reducing their energy consumption."

The scientists were looking for a new way to lower the energy use of computer chips, which are currently silicon-based and are packed with transistors that rely on electric currents, or moving electrons, that generate a lot of waste heat.

"Making transistors go faster was requiring too much energy," said Bokor.

"The chips were getting so hot they'd just melt."

In an effort to decrease energy use, researchers are turning to alternatives to transistors, after decades of microprocessor development focused on packing ever greater numbers of smaller, faster transistors onto chips.

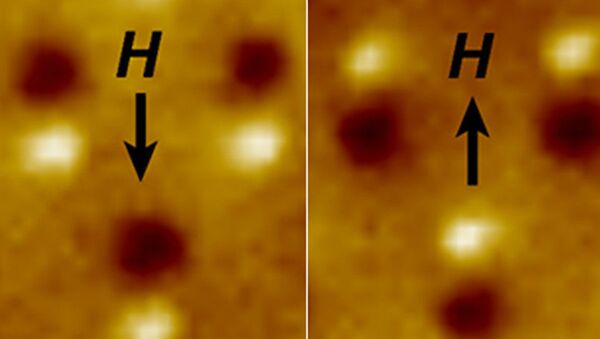

Chips that use tiny bar magnets are one promising alternative to conventional transistors, as they require no moving electrons and expend less energy.

The study from the scientists at Berkeley has shown that these magnetic chips can operate with the lowest fundamental level of energy dissipation possible under the laws of thermodynamics.

This amount is consistent with the Landauer limit, a principle stating that in any computer, each single-bit operation must expend at least an absolute minimum amount of energy.
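To give a sense of scale, the Landauer limit is usually written as E = k_B × T × ln 2 per bit operation, where k_B is the Boltzmann constant and T is the absolute temperature. The article does not quote a figure, so the short Python sketch below is only an illustration: the function name and the choice of 300 K as "room temperature" are assumptions, not values taken from the paper.

```python
import math

# Boltzmann constant in joules per kelvin (exact value in the SI system).
BOLTZMANN_J_PER_K = 1.380649e-23
# Elementary charge in coulombs, used here only to convert joules to electronvolts.
ELEMENTARY_CHARGE_C = 1.602176634e-19


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum energy per single-bit operation, k_B * T * ln(2), in joules.

    The function name is hypothetical and chosen for this illustration.
    """
    if temperature_kelvin <= 0:
        raise ValueError("temperature must be a positive absolute temperature")
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


if __name__ == "__main__":
    room_temperature_k = 300.0  # assumed value, not stated in the article
    energy_j = landauer_limit_joules(room_temperature_k)
    energy_ev = energy_j / ELEMENTARY_CHARGE_C
    print(f"Landauer limit at {room_temperature_k:.0f} K: {energy_j:.3e} J")
    print(f"Equivalent in electronvolts: {energy_ev:.4f} eV")
```

At the assumed 300 K this comes to roughly 3 zeptojoules (about 3 × 10⁻²¹ joules) per bit operation. Because the limit scales linearly with temperature, halving the absolute temperature halves the minimum energy.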

"Although experimental tests of Landauer’s limit have previously been performed using trapped microbeads, our result using a completely different physical system confirms its generality and, in particular, its applicability to practical information processing systems," the scientists wrote in their paper.

The advance is particularly important for mobile devices, which need powerful processors that can last a day or more on small, lightweight batteries. The growth of cloud computing, meanwhile, is driving up electricity demand as the number of giant data centers increases.

"The significance of this result is that today’s computers are far from the fundamental limit and that future dramatic reductions in power consumption are possible."

"Given that power consumption is the key issue that limits the continued improvement in digital computers, the result has profound implications for the future development of information technology," the paper concluded.

Moore's Law, which grew out of an observation made by Intel co-founder Gordon Moore in 1965, holds that the number of transistors in a dense integrated circuit doubles approximately every two years. Although Intel said the pace slowed last year, the new magnetic technology offers hope that advances in computer processing will continue.