With recent research describing human emotion as a byproduct of learning, machines with artificial brains may become depressed, or worse, if they ever come to think or feel, The Next Web tech blog wrote.

Zachary Mainen, a neuroscientist at the Champalimaud Center for the Unknown in Lisbon, believes that depression and hallucinations depend on a chemical in the brain called serotonin.

“If serotonin is helping solve a more general problem for intelligent systems, then machines might implement a similar function, and if serotonin goes wrong in humans, the equivalent in a machine could also go wrong,” Dr. Mainen said at a symposium on the implications of recent experiments into how serotonin affects people’s decision-making.

To test this, the scientists conducted a series of experiments. When they manually triggered serotonin production in mice running around in a field, the rodents slowed down and appeared to consider the situation almost immediately after the spike.

When they injected the same mice with a serotonin inhibitor, Mainen and his team found that learning was delayed, even more so when the animals could not release serotonin naturally.

Scientists also believe that the use of hyper-modulators, like serotonin, could prevent autonomous systems from becoming stuck in outdated models.


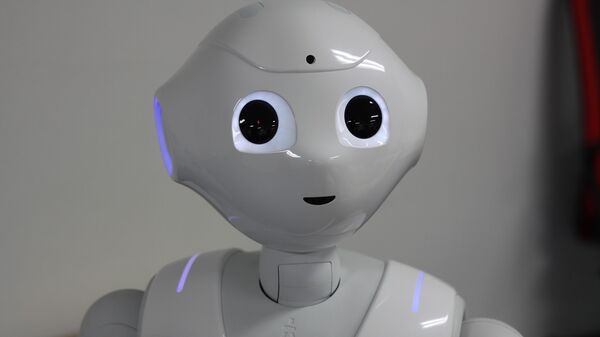

Designed to deal with a static environment using supervised learning, “thinking” robots will hardly be able to adapt to the constantly changing world around them.

That being said, giving them feelings and an ability to hallucinate things that aren’t really there doesn’t look like a good idea either.