Dr. Robert Epstein — The pixels have hit the fan. The EU just fined Google $2.7 billion for favoring its online comparative shopping service in its search results.

Google officials knew this fine was coming and that much worse is possible, so in August 2015, they reorganized the company so that it is now part of a holding company called Alphabet. This was not done, as Larry Page, one of the company's co-founders, claimed at the time, to make the company "cleaner and more accountable" (what on earth does that mean?). It was likely done to try to protect the value of the stock held by the company's major stockholders. The EU's antitrust action against Google had been filed in April 2015, and that got Google officials thinking. When the US Department of Justice broke up AT&T in the 1980s, the stock value dropped by 70 percent.

Google officials are nervous because they know exactly how many questionable practices they engage in every day, along with how many have been uncovered so far and how many are still unknown to authorities. My associates and I have discovered some of these practices, and we study them every day. They are brilliant, mind-blowing, and largely invisible new ways of both tracking and manipulating human behavior on an unprecedented scale, all serving a singular purpose: to make Google richer. Before I give you a few examples of the practices we are examining these days, let me put the big EU fine into a broader context.

First of all, Google can handle it. The company will likely have revenues of over $100 billion this year, so they can pay the fine painlessly, and they also have unlimited legal resources. In court, they will claim, as they always do, that they haven't done anything wrong, that it's just the algorithm, and that the algorithm — in its objective purity, driven by its deep digital desire to serve human needs — just happens to rank Google products above inferior ones.

This is complete nonsense. As I explain in detail in my US News essay, "The New Censorship," Google employees have complete control over where items occur in search results. The search algorithm is just a set of computer instructions written by Google software engineers, and they manually adjust the algorithm daily to remove items from the search results it generates (about 100,000 items per year under Europe's "right to be forgotten" law alone) or to demote companies that piss Google off.

Second, this hefty fine is just the tip of a very large digital iceberg. Bear in mind that it is based on merely one instance of search bias: putting Google's own comparative shopping service ahead of others. A US Federal Trade Commission investigation in 2012 found that Google's search results are generally skewed to favor its own products and services. When was the last time you Googled a movie without seeing YouTube — owned by Google — in the top search result? Both India and Russia have levied fines against the company for rigging search results, with a much larger fine still looming in India. Bear in mind also that the EU's search-related action against Google is just one of three antitrust cases they have initiated so far; the other two concern Google's dominance in mobile computing and advertising. Europe's concerns about Google are so deep that in late 2014 the European Parliament voted (in a non-binding proceeding) to break Google up into pieces, reminiscent of the DOJ's dismantling of AT&T.

These and other legal actions are all about new techniques Google has developed for tracking and manipulating people. The search engine may have started out as a simple index of web pages, but it was soon refined and repurposed. Its main purpose became to track user behavior, yielding a vast amount of information about people that Google still leverages to send out the targeted advertisements that account for most of the company's income. The public still thinks of the Google search engine, Google Maps, Google Wallet, YouTube, Chrome, Android and a hundred other Google platforms as cool services the company provides free of charge. In fact, they are all just gussied-up surveillance platforms, and authorities around the world are finally figuring that out.

As the EU's recent antitrust decision shows, authorities are also beginning to figure out how extensively Google is using its platforms to suppress competition and manipulate user behavior. The EU's investigation found, for example, that when Google officials realized in 2007 that their comparative shopping service was failing, they elevated their own service in their search results while demoting competing services. This increased traffic to Google's service "45-fold in the United Kingdom, 35-fold in Germany, 19-fold in France, 29-fold in the Netherlands, 17-fold in Spain and 14-fold in Italy" while reducing traffic to competitors by "85% in the United Kingdom, up to 92% in Germany and 80% in France."

Does position in search results really affect user behavior that much? You bet. My own research has shown, for example, that favoring one political candidate in search results can shift the voting preferences of undecided voters by up to 80 percent in some demographic groups. Search results that favor one perspective over another — on abortion, fracking, homosexuality, you name it — also dramatically shift the opinions of people who haven't yet made up their minds. The research also shows, unfortunately, that this type of manipulation is virtually invisible to people and, worse still, that the few people who can spot favoritism in search results shift even farther in the direction of the bias, perhaps because they see the bias as a kind of social proof.

What if authorities were examining not just the dominance of Google's comparison shopping service in the company's search results but the dominance of, say, anything, in those results: certain brands of mobile phones or computers; political candidates who serve or interfere with the company's needs; attitudes toward Oracle, Microsoft, Yahoo, and other companies that compete with or are in conflict with Google; news stories that are "fake" or anti-Trump or pro-Google; and on and on. Do you see how big this problem really is? And no one — at least not yet — is tracking any of this. The trillions of pieces of information Google is showing people every day are all ephemeral. They hit your eyeballs and then disappear, leaving no trace, and much or most of them favor one perspective or another. Do we really want a single company, which handles 90 percent of search in most countries, to have the power to manipulate our opinions about anything? How, over the years, has Google been exercising this power?

My newest research is showing that it is not just the order of search results we need to worry about. Here are three examples of manipulations we are currently studying which, once again, no authorities are tracking — at least not yet:

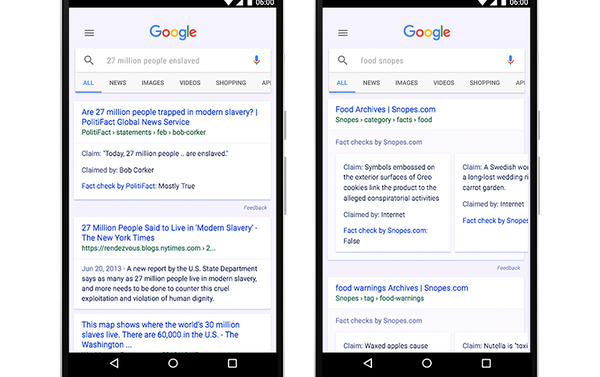

- Search suggestions: Before you even see those search results, Google typically flashes search suggestions at you. When Google introduced this feature in 2004, they showed you a long list of suggestions — usually 10 — that indicated what other people were searching for; Bing and Yahoo still do this. Google, however, now typically shows you just four suggestions that are often unrelated to what others are searching for. Instead, they show you terms they believe you are likely to click, which gives them a great deal of control over your search. One way they now manipulate searches is by strategically including or withholding negative search terms. Negative terms (like "suicide" or "crimes") attract far more clicks than neutral or positive ones do — 10-to-15 times as many in some demographic groups. By withholding negative suggestions for a perspective or person the company supports while allowing negatives to appear for a person or perspective the company dislikes, they drive millions of people to view material that shifts opinions in ways that serve the company's needs. Four, it turns out, is the magical number of suggestions that maximizes their control. It maximizes the power of the negative search term to draw clicks while also minimizing the likelihood that people will type their own search term.

- I'm feeling lucky: When you mouse over a Google search suggestion, you see a small "I'm feeling lucky" link. With this feature, Google gets people to skip seeing search results altogether; it gives the company complete control over the actual web page you see. By limiting the number of suggestions you see and then attracting you to the "lucky" link, they exert a high degree of control over what opinion you will form on issues you're uncertain about. All of this occurs without users having any awareness of how they are being manipulated.

- The featured snippet: Google is rapidly moving away from the search engine model of tracking and manipulation toward much more powerful means. (To view a satire I wrote about Google donating its search engine to the American public, click here.) The "featured snippet" — the answer box we see more and more frequently above the search results — is one such tool we are studying. Google officials have long known that people don't really want to see a list of 10,000 search results when they ask a question; they just want the answer. That's what the snippet is now giving people — the answer, wrong or right, and it's often wrong. In one of our newest experiments, the voting preferences of undecided voters shifted by 36.2 percent when they saw biased search rankings without an answer box, but when a biased answer box appeared above the search results, the shift was an astounding 56.5 percent. In other words, when you give people the answer, you have an even larger impact on their opinions, purchases, and voting preferences. Google is rapidly shifting to this new model of influence not just on its search engine, but with its new audio Home device ("Okay Google, what's the best Italian restaurant around here?"), as well as with its new Android-based Google Assistant.

It took years for the EU to collect and analyze the terabytes of data it needed to make a case against Google in the shopping services action. Meanwhile, Google is moving light years ahead. This might always be a problem when it comes to the machinations of high-tech companies. Laws and regulations will necessarily lag way behind. Unless, that is, we change the game.

As The Washington Post and other media outlets reported in March 2017, about six months before the November 2016 election in the US, my associates and I deployed a Nielsen-type system for tracking search results in real time. Using custom software and a nationwide network of anonymous field agents, we were able to look over the shoulders of people as they conducted a wide range of election-related searches using Google, Bing, and Yahoo, ultimately preserving the first page of results from 13,207 searches and the 98,044 web pages to which the search results linked. We found that these searches, especially the ones conducted on Google, generally favored Hillary Clinton over Donald Trump in all ten search positions on the first page of search results. Perhaps more important, we learned that our monitoring system could be used to track any of the ephemeral stimuli that Google and other tech companies are showing us every day: news feeds, advertisements, you name it.

I am now working with colleagues from Stanford, Princeton, King's College London and a dozen other institutions to create an organization that will monitor the online behavior of Big Tech companies worldwide on a real-time basis. If we do this right, it will take only seconds, not years, to spot illegal or unethical behavior, and we might even be able to anticipate manipulations before they occur, providing evidence on an ongoing basis to journalists, regulators, legislators, law enforcement officials, and antitrust investigators. Such a system will force Big Tech companies, both now and in the future, to be more accountable to the public, and it will also help preserve the free and fair election.

In the meantime, my advice to consumers is: be wary of the information you obtain online and, more important, be cautious about the information you reveal. Learn how to increase your online privacy; it's not that hard.

And my advice to Google officials is: cut down on the greed and arrogance. The wheels of justice turn slowly, but they do turn.

_______________________

A Ph.D. of Harvard University, Robert Epstein is senior research psychologist at the American Institute for Behavioral Research and Technology, the author of 15 books, and the former editor-in-chief of Psychology Today magazine. Follow him on Twitter @DrREpstein.